Mouse Grooming Behavior Data

Neural Network Annotated Training Data

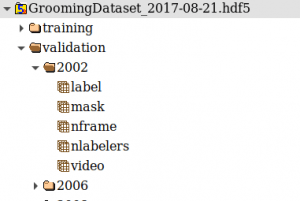

All training data are contained in a single HDF5 file. HDF5 organizes data much like a file system: datasets play the role of files, and groups play the role of the folders that contain them.

The easiest ways to work with this data are the HDFView graphical browser or, programmatically, the h5py Python package.

The structure of the data is as follows:

- First-level grouping is the Train/Validation split
- Second-level grouping is by video clip
- Each video clip contains 5 datasets:
  - nframe
    - Number of frames in this video
    - Shape: 1
  - video
    - Raw video
    - Shape: nframes × 112 × 112, where nframes is the value stored in nframe
  - label
    - Label for each frame: 0 = not grooming, 1 = grooming
    - Shape: nframes
  - mask
    - Whether or not the annotators agreed on each frame: 0 = disagree, 1 = agree
    - When annotators disagree, label contains the value from the first person to annotate the frame
    - Shape: nframes
  - nlabelers
    - Number of annotators who labeled the video clip
    - Shape: 1
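A minimal h5py sketch of reading the file, assuming the layout described above. The exact names of the Train/Validation groups are not given here, so `list_splits` simply returns whatever top-level groups exist rather than hard-coding them. The helper names `list_splits`, `iter_clips` and `agreed_frames` are hypothetical, and dropping frames where the annotators disagreed is only one possible way to use the mask.

```python
import h5py
import numpy as np


def list_splits(path):
    """Return the names of the first-level groups (the Train/Validation split)."""
    with h5py.File(path, "r") as f:
        return list(f.keys())


def _scalar(dataset):
    # nframe and nlabelers have shape 1; flatten and take the single value
    return int(np.asarray(dataset[()]).ravel()[0])


def iter_clips(path, split):
    """Yield (clip_name, arrays) for every video clip group in one split.

    Assumes each clip group holds the five datasets described above:
    nframe, video, label, mask and nlabelers.
    """
    with h5py.File(path, "r") as f:
        for clip_name, clip in f[split].items():
            yield clip_name, {
                "nframe": _scalar(clip["nframe"]),
                "video": clip["video"][()],  # nframes x 112 x 112, read into memory
                "label": clip["label"][()],  # 0 = not grooming, 1 = grooming
                "mask": clip["mask"][()],    # 0 = disagree, 1 = agree
                "nlabelers": _scalar(clip["nlabelers"]),
            }


def agreed_frames(video, label, mask):
    """Keep only the frames on which the annotators agreed (mask == 1)."""
    keep = np.asarray(mask).astype(bool)
    return np.asarray(video)[keep], np.asarray(label)[keep]


# Example (assumes the file has been downloaded to the working directory):
# path = "GroomingDataset_2017-08-21.hdf5"
# for split in list_splits(path):
#     for name, clip in iter_clips(path, split):
#         frames, labels = agreed_frames(clip["video"], clip["label"], clip["mask"])
```

Reading each dataset with `[()]` loads it fully into memory, which keeps the code simple; for very long clips, slicing the h5py dataset directly avoids loading every frame at once.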

Link to the annotated dataset:

ftp://ftp.jax.org/kumarlab/2021_Grooming/GroomingDataset_2017-08-21.hdf5

A copy of this annotated dataset is also available on Zenodo:

https://zenodo.org/record/4646088

Neural Network Training Code

We distribute the code we used to create the dataset and to train the individual models.

https://github.com/KumarLabJax/MouseGrooming

Please see the documentation on GitHub for further information.

Neural Network Inference Code

Because our final model combines multiple networks into a consensus, we share the code that applies this combined network to new videos. Additionally, because the training dataset contains 112×112 frames cropped around the mouse, we place our tracking network in front of the grooming network so that this inference code operates directly on full 480×480 videos.
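The two ideas described above, cropping a 112×112 patch around the tracked mouse and combining several networks into one prediction, can be sketched in NumPy as follows. This is an illustration under stated assumptions, not our released implementation: the mouse center is assumed to come from the tracking network as (row, col) pixel coordinates, and the consensus is assumed to be a plain average of per-frame grooming probabilities thresholded at 0.5. The function names `crop_around` and `consensus` are hypothetical; see the Inference code on GitHub for the actual method.

```python
import numpy as np

CROP = 112  # size of the training-set frames


def crop_around(frame, center, size=CROP):
    """Cut a size x size patch centered on `center` (row, col).

    Near the borders the window is shifted inward so the patch always
    lies fully inside the frame (assumes the frame is at least size x size).
    """
    h, w = frame.shape[:2]
    r, c = (int(round(v)) for v in center)
    top = min(max(r - size // 2, 0), h - size)
    left = min(max(c - size // 2, 0), w - size)
    return frame[top:top + size, left:left + size]


def consensus(prob_per_model, threshold=0.5):
    """Average per-frame grooming probabilities from several models.

    prob_per_model: array-like of shape (n_models, nframes), values in [0, 1].
    Returns (mean_probability, binary_label) per frame, where 1 = grooming.
    """
    probs = np.asarray(prob_per_model, dtype=float)
    mean = probs.mean(axis=0)
    return mean, (mean >= threshold).astype(np.uint8)
```

Averaging probabilities before thresholding is one common ensembling choice; majority voting over each model's binary labels is another, and the two can disagree on frames near the threshold.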

The code we use to run inference is on our GitHub: https://github.com/KumarLabJax/MouseGrooming/tree/master/Inference

We also release a Singularity container that packages our tracking and grooming models together with the inference code in a simple-to-use format:

ftp://ftp.jax.org/kumarlab/2021_Grooming/GroomingInferRelease.sif

Example usage: `singularity run --nv GroomingInferRelease.sif Input_Movie.avi`

The input movie must be a 480×480 video that looks visually similar to our training dataset. Because this model was trained without distortion augmentation, even slight differences in lighting can degrade its performance.
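To process many videos, the example usage command can be wrapped in a short Python loop. This is a hypothetical convenience script, not part of our release: it assumes `singularity` is on the PATH, that the videos are `.avi` files in one folder, and that each call takes a single input movie exactly as in the example usage. It does not check the 480×480 requirement, so verify your videos before running it.

```python
import subprocess
from pathlib import Path

SIF = "GroomingInferRelease.sif"


def build_command(video, sif=SIF):
    """Return the argument list for one inference run (same as the example usage)."""
    return ["singularity", "run", "--nv", str(sif), str(video)]


def run_all(video_dir, sif=SIF, dry_run=False):
    """Run the container once per .avi file in video_dir, in sorted order.

    The glob is case-sensitive on most systems, so .AVI files are skipped.
    With dry_run=True the commands are returned without being executed.
    """
    commands = [build_command(v, sif) for v in sorted(Path(video_dir).glob("*.avi"))]
    if not dry_run:
        for cmd in commands:
            # check=True raises CalledProcessError if a run fails
            subprocess.run(cmd, check=True)
    return commands
```

Calling `run_all("videos", dry_run=True)` first is a cheap way to confirm which files would be processed.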

A copy of the inference code included in the Singularity container is also available in our Zenodo project:

https://zenodo.org/record/4646088

Example Videos

We share example videos in the supplementary material of our paper:

https://elifesciences.org/articles/63207/figures#fig3video1

Additional videos can be viewed by using the arrow keys on that page to step through all 9 of our video supplements.

Behavior Data

Behavior data are available in the Mouse Phenome Database (MPD).

MPD identifiers: Kumar3 or MPD:1051

https://phenome.jax.org/projects/Kumar3